Блог:ROSA Planet

A technical blog of ROSA Laboratory.

All content is published under Creative Commons Attribution-ShareAlike 3.0 License (CC-BY-SA)

Please, subscribe to RSS/Atom feed. If you have any questions do not hesitate to contact us

NrjQL kernel - the "heart" of ROSA

Kernel is the core of any Linux distribution and it is the kernel that gave name to all these systems. Linux kernel contains a lot of configuration options and you can get very different kernel images from the same source code. In addition, there are a lot of patches all over the worlld that are not included to the main development tree but that can be interesting for certain groups of users. So it is no wonder that different Linux distributions even if based on the same version of source code from Linux Torvalds provide users with quite different kernels,

In ROSA, we use the kernel variations initially created by the MIB (Mandriva International Backports) group and named nrj and nrjQL. What do these names actually mean and what features stand behind them? Let's listen to Nicolò Costanza who builds kernels for ROSA Desktop distributions:

Technically speaking, with the "NRJ" configs the CPU and RCU are configured with full Preemption enabled (CPU Preemption, RCU Preempt tree) and in full Boost mode. The "QL" mode also adds the CK1 patchset including BFQ disk I/O scheduler and BFS CPU load scheduler. One should also mention the presence of UKSM for a better memory manager and TOI for better suspension and hibernate functionality, each designed with desktop system performance and responsiveness in mind. So we really try to do everything to obtain a feeling close to realtime kernel from user point of view.

What are the roots of the name?

We looked for a small nickname (2 to 4 chars) for our kernel variation. Several names were proposed and at the end the most of MIB people liked NRJ, Amongst the another proposals there was kernel-viagra, but this was rejected:) The NRJ word actually stands for ENERGY and should underly that the kernel is acting for a PC like an energetic drink do for a human being, like a RED BULL, but we could not adopt that name or another registered trademark. In the case when PC is very stressed by different tasks but a slow response is unacceptable, NRJ try to offer the missing ENERGY to your PC. With NRJ the PC is more responsible in a multitasking experience.

So here is the short description of the kernel used in ROSA.

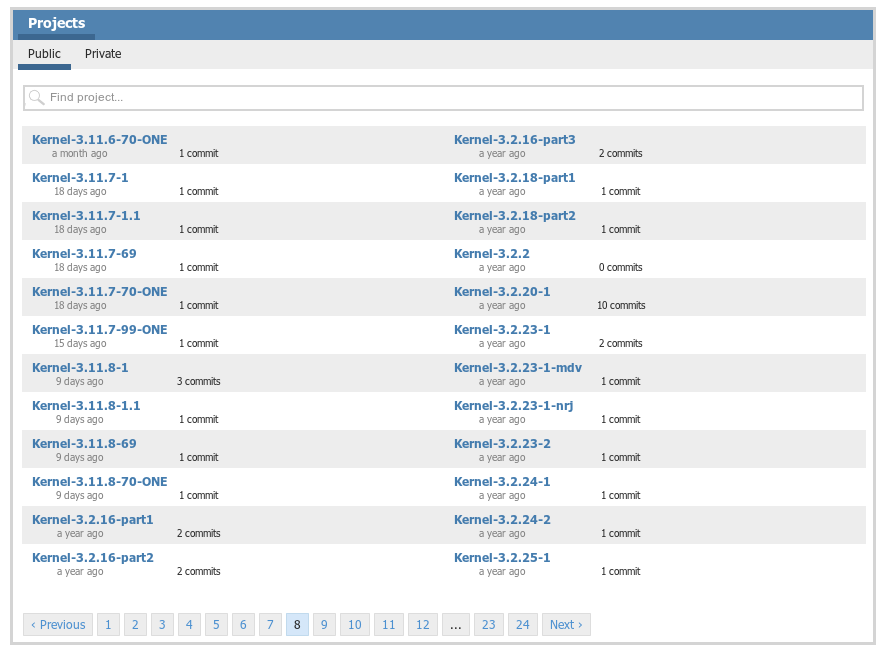

Actually we have several variations of the kernel - Nico always builds at least vanilla, nrj-laptop and nrj-desktop editions. The process of Nico's work can be always tracked at Nico's repository in ABF. Finally, more details about history of nrjQL patches in ROSA can be found in MIB forum.

Linux Kernel ABI Tracker

The Linux kernel is constantly evolving: improved hardware support, optimized various kernel subsystems, etc. As a result, the size of the kernel and the number of its ABI/API interfaces is growing from version to version. Thus, in the course of development, some of the old interfaces are subject to change, which may break the operation of other software components using kernel interfaces: kernel modules, drivers, dynamic testing/trace tools, etc.

For regular automatic analysis of changes in Linux kernel interfaces we have developed the Kernel ABI Tracker. This tool is looking for new versions of the kernel at kernel.org, building them and analyzing API changes using a set of basic tools. For each version of the kernel the tracker creates a so-called ABI dump from its debug-information using the ABI Dumper tool. ABI dump contains information about all public interfaces exported by the kernel: their properties, parameter types and the structure of data types. And then a pair of ABI dumps for two consequent kernel versions are passed to the ABI Compliance Checker tool in order to analyze changes and create the report. This report describes changes in the kernel API interfaces (added and removed symbols, changes in data types, etc.) and divides them by the severity level for applications. In addition to its direct purpose, ABI dumps may also be used for other kinds of analysis by third-party developers and are therefore available for download.

The tracker provides reports on the results of testing defconfig-configuration of all the latest longterm, stable and mainline kernel versions. The home page shows a plot of the number of interfaces depending on the version of the kernel. The results so far obtained for the two architectures: x86 and x86_64. Support for arm architecture is planned in the near future. Also planned testing of other kernel configurations (allyesconfig, etc.).

Basic tools ABI Dumper and ABI Compliance Checker, previously developed in the ROSA laboratory to track ABI changes in C libraries, have required significant improvements to be able to analyze changes in Linux kernel. The problem was in the huge depth of data structures and a big number of kernel interfaces, so both tools required too much cpu time and RAM to operate. As a result, the tools now are faster in the analysis of large input objects.

Towards the Better Packages - Rpmlint News

Rpmlint is one of the major tools used by every ROSA maintainer to control package quality. This tool checks whether packages meet the distribution packaging policies. It is launched automatically at the end of every build performed in ABF. In addition, we constantly monitor ROSA repositories with Rpmlint andpublish results at the FBA site.

Packaging policies are not carved in stone and are subjected to changes as the distribution evolves. From time to time new ideas come to developers' minds about new useful checks that could be implemented. As a result, Rpmlint is also constantly evolving. Not long ago we performed a significant refactoring of its code and dropped some checks that are not relevant for ROSA anymore - such as invocation of ldconfig in post-scripts, definition and clean up of BuildRoot and so on. These checks have not been used for a long time, but they made the code larger and decrease the speed of the tool.

Besides code clean up, we have implemented some new interesting features in our Rpmlint.

First of all, we have implemented stricter checks for packages containing executable files or libraries with RPATH parameter. This parameter affects the order of folders where the search is performed by dynamic loader when looking for libraries during application start - the paths listed in RPATH are looked in the first order. This feature should be used with care, especially in libraries that can be used by other distribution components. In ROSA, if you need to use RPATH in your package, it is recommended to put all libraries which should be accessed using RPATH in a separate subfolder of the /usr/lib directory (it is recommended to name this subfolder in the same way as the package itself - e.g., /usr/lib/fglrx contains libraries specific to fglrx). If RPATH points to a directory which contains libraries not expected by maintainer, then application behavior can become surprising and unpredictable. A common example of such situation is the case when RPATH contains system directories such as /lib or /usr/lib. As the practice shows, adding these values to RPATH can have fatal consequences - for example, we have recently fixed a problem with Empathy which failed with segmentation fault on a system with Nvidia drivers due to RPATH set in libwebkit library (used by Empathy). From now on, Rpmlint automatically detects such situations at the end of package build and stops the build with a non-zero exit code if it detects a shared library or executable file that have RPATH containing /lib, /usr/lib, /lib64 or /usr/lib64.

Next, by request of our Russian localization team, we have added checks for untranslated names and comments in package desktop files. Localization of such files is very important since they are used to provide user with information about application that will be launched by clicking on particular icon in SimpleWelcome or RocketBar. Since these checks are used by Russian localization team only, we have not included them to the main branch of Rpmlint shipped with ROSA distributions. The checks are used only when launching tests at FBA and their results can be observed in corresponding sections of the site. For example, for the main repository of ROSA Desktop Fresh R1:

The same checks can be easily implemented for other languages if corresponding localization teams are willing to monitor and fix the whole set of desktop files.

Finally, Rpmlint can now check correctness of package suffixes. In ROSA, packages of every distribution version have their own suffix (to distinguish packages from different versions) which is automatically set by ABF when package is built. However, it sometimes happens when a package is not built in ABF but copied to repository manually from corresponding repository of a previous ROSA version. This is usually used in cases when packages have cycled build dependencies, and the easiest way to rebuild such package for a new distribution version is to use uts dependencies from the previous one. Normally such "manually added" packages are dropped from the repository as soon as they are no longer needed. However, it sometimes happens that maintainers forget to drop the old package so it remains in the repository like a garbage. It would be nice to automatically detect packages with wrong suffixes in repositories, and no this possibility is implemented in Rpmlint by means of non-standard-distsuffix check. The list of allowed suffixes is defined in Rpmlint settings, in the ValidDistSuffixes parameter.

WiFi and Broadcom - Handling the Errors

Two bugs related to error handling have been fixed by our developers in the proprietary driver for Broadcom WiFi adapters (broadcom-wl, a.k.a. broadcom-sta, a.k.a. wl). Both problems (#2146, #2667) lead to kernel crashes at boot time on the laptops of several our users.

By the way, not all major Linux distributions have these problems fixed at the time of writing.

LinuxCon Europe 2013 - About Data Races in the Linux Kernel

October 22, 2013 - Eugene Shatokhin, one of our developers, gave a talk devoted to data races in the Linux kernel modules at LinuxCon Europe (slides, notes for the slides).

Such errors could be very hard to find and their consequences may vary from negligible to critical. Hunting the races down is especially important for the Linux kernel, where, for example, the code of the drivers can be executed by many threads at the same time. Now add interrupts and other asynchronous events and remember that the synchronization rules for the data are not always fully described (if described at all)...

Most of the talk was about the tools that can detect data races in the Linux kernel. KernelStrider and RaceHound tools, Eugene was one of the main developers of, were covered in more detail.

KernelStrider collects data about the operation of the kernel component (e.g. a driver) under analysis in runtime. The information about memory accesses, allocations and deallocations, locks and unlocks, etc., is then analyzed in the use space by ThreadSanitizer (Google). The algorithm of searching for races is briefly described here.

KernelStrider may issue false alarms in some cases. For example, false alarms happen when a network driver turns off interrupts in hardware and then accesses some common data without the risk of conflicts with the interrupt handlers.

RaceHound tool allows to check the warnings about data races issued by KernelStrider and find real races among them. RaceHound works as follows.

- A software breakpoint is placed on an instruction in the binary code of the driver that may be involved in a data race.

- When the breakpoint triggers, RaceHound determines the address of the memory area which is about to be accessed by the instruction. Then it places a hardware breakpoint to track the accesses of the needed kinds (writes only or both reads and writes) to that memory area.

- A small delay is made before the execution of the instruction.

- If some other thread accesses that memory area during the delay, the hardware breakpoint will trigger and RaceHound will report a race.

That is, KernelStrider plays the part of a "detective" or an "analyst" here and narrows the range of "suspects" - the fragments of the code possibly involved in data races. RaceHound is then a covert monitoring system tracking these suspects. If it catches a suspect "red-handed", everything is clear, the "crime" (data race) is confirmed.

There were many questions asked both during the talk and after it. Among other things, the audience was interested in the following.

- Plans to support ARM (the mentioned tools currently work on x86 only) - may be later, not in the near future.

- Situations when KernelStrider misses data races - yes, this is possible in some cases, mostly due to how ThreadSanitizer works as well as due to sometimes inaccurate event ordering rules used.

- Support for suspend/resume in KernelStrider - yes, KernelStrider operates during suspend and resume too.

- Support for analysis of the kernel proper rather than the modules in KernelStrider and RaceHound - not implemented at the moment.

- Instrumentation of the code to be analyzed during the compilation rather than during its loading as KernelStrider does now - may be beneficial, it is actually one of the future directions of the development.

- and so on.

The developers from Intel actively participated in the discussion of the races found by the tools mentioned above. It is no surprise because these races were found in the network driver e1000, created by Intel. A strange thing became obvious during that discussion: it is a common practice in the network drivers not to use synchronization in some cases even if a race may happen as a result (and the races were actually found there). This is the case, for example, for NAPI and some of the functions involved in data transmission. This is probably to avoid performance losses due to locking but the estimates of such losses as well as the guidelines how to avoid problems there are yet to be found.

It seems that many kernel developers share the following attitude to the data races:

- Have you observed any particular problems due to that race? Has anything crashed or otherwise worked wrong?

- Not yet.

- Oh, well.

And nothing happens then.

Reasonable? Perhaps, but if one remembers this article, for example, the reason becomes less certain.

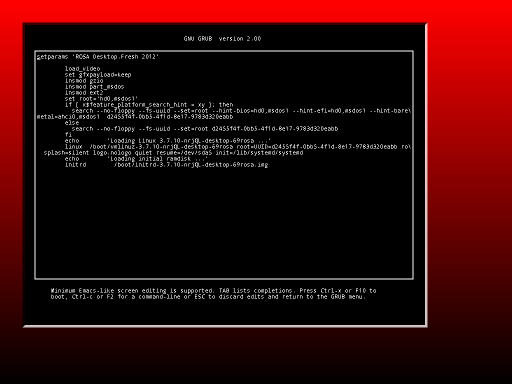

More GRUB2 upgrades and bugfixes

Out developers continue upgrading the GRUB2 bootloader.

There were 14 patches made and token into the upstream.

Updates Builder – Pull Requests and automatic correction of build failures

Repositories of ROSA Desktop Fresh R1 contain more than 15,000 packages that can satisfy needs of almost every user. However it's not easy to maintain such a large set of applications, libraries and other software components. The thing is that new versions of many programs are released very frequently and maintainers have to constantly track them, build for ROSA, test and decide if it makes sense to update ROSA package to a newer version. To automate these tasks, we use the Updates Builder tool that constantly monitors upstream and automatically builds new versions in ABF.

Initially, the way of Updates Builder work was pretty simple – to build a new package version, it used a spec file from the previous one with replaced version value and tarball name. This approach turned to be very effective – it is common for newer versions of many packages to contain only minimalistic changes with respect to the previous ones, so there is no need in serious spec file modifications to build a new package. As a result, during the first three months of Updates Builder usage we have updated several hundreds of packages by its means. Нowever, during these three months we discovered more ways to improve effectiveness of the tool.

It turned out that new versions of package often fail to build due to trivial issues – for example, new version has a couple of new files not mentioned in spec, require new BuildRequires entries and so on. These changes lead to build failures but can be fixed by small modifications of the spec. So why not to teach the tool to automatically apply typical modifications?

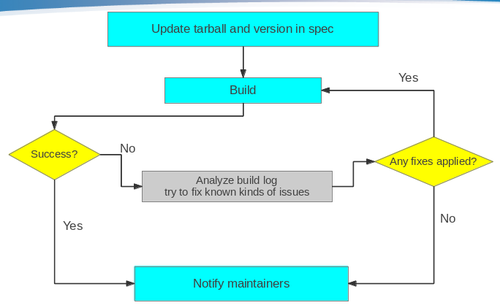

To implement such a feature, we have developed special scripts to analyze errors occurred during the package build. The scripts are launched automatically in case when a build triggered by Updates Builder fails. If the scripts detect one of the errors known to them, they automatically fix the spec file to avoid these errors and rebuild the package once again. This process can be repeated several times, since as old errors disappears, the new ones are introduced. Finally, Updates Builder workflow in ROSA looks like the following:

Currently the scripts try to fix the following kinds of errors:

- Unneeded patches

- Reverse (or previously applied) patch detected

- Missing build requirements on Perl modules

- Can't locate <perl_module> in @INC

- Added or removed files:

- File not found / File not found by glob

- Missing file specified in %doc

- Installed (but unpackaged) file(s) found

- Rpmlint errors

- debuginfo-without-sources and empty-debuginfo-package

Note that different heuristics are used to fix such issues and we can't guarantee that the fixes applied are always correct, even if the package was successfully built after them. For example, to avoid debuginfo-without-sources and empty-debuginfo-package errors we currently disable debug package creation. However, it could be more correct to mark the package as architecture-independent (noarch) or add some build and compiler flags without which debug information is not generated. So before merging updates suggested by Updates Builder to official repositories maintainers should first analyze the changes applied; it is possible that some additional corrections should be made.

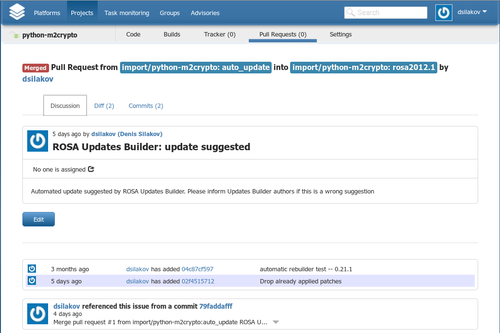

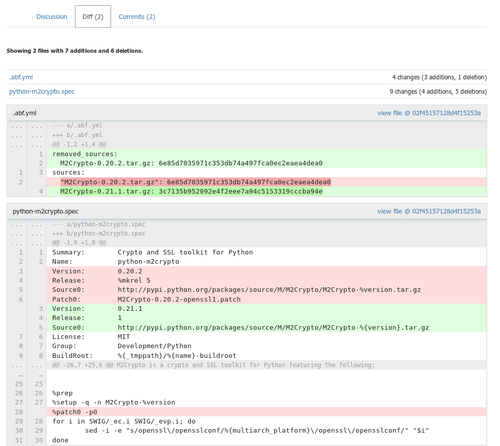

By the way, the process of analyzing updates suggested by Updates Builder and merging them to official repositories gas become much more convenient. Up to now, Updates Builder only sent emails with build results and then maintainers had to manually investigate changes in the auto_update branch of Git repository and merge these changes to official branches. From now on, in case of successful build of a new package version a Pull Request is automatically created in ABF. Maintainers are able to visually analyze all modifications and accept them by means of a single button.

Urpmi, rpmdrake and automated dependency resolution

The specific of ROSA repositories (as well as of repositories of many other Linux-based systems) is that dependencies of some packages can be resolved in multiple ways - since sometimes there are several packages providing the same feature. For clarity, let's look at example.

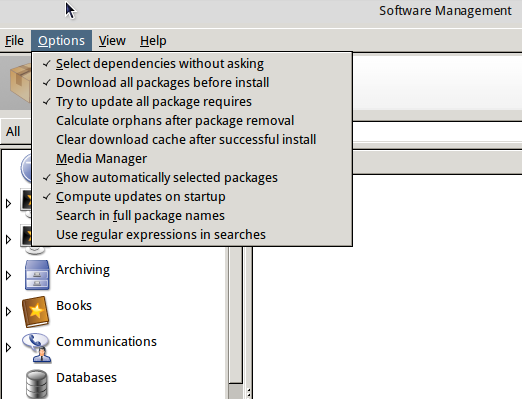

We have a Tesseract OCR in our repositories which requires language-specific packs to work with certain languages. Tesseract currently supports more than 70 languages and for every language a separate data package exists in our repositories. But it is unlikely that user will need all these languages. For most users, it is enough to provide support for their native language. So then installing tesseract, we should somehow decide which language packs to install. To indicate that we have a choice, the following trick is performed on the package level: the tesseract package itself requires tesseract-language, and every package with language-specific data provides tesseract-language. When installing tesseract, urpmi (or Rpmdrake) detects that tesseract-language requirement can be resolved in multiple ways. In f this is the case, urpmi and Rpmdrake either ask user for the choice or perform selection automatically (if --auto or corresponding checkbox in Rpmdrake settings is specified).

By the way, in the recent versions automatic dependency selection in Rpmdrake is turned on by default.

GRUB2: new options - terminal window size and position

Our developers continue to improve GRUB.

This time they have made new options. Now you can set terminal window size and position in the theme's file.

Также добавлена возможность изменять рамку терминала - пустое пространство, оставляемое со всех сторон в окне терминала. Also you can change terminal border width. (free space from each side inside the terminal window)

terminal-left: "50" terminal-top: "50" terminal-width: "800" terminal-height: "600" terminal-border: "10"

The patch has been approved by the upstream and added to the source code.

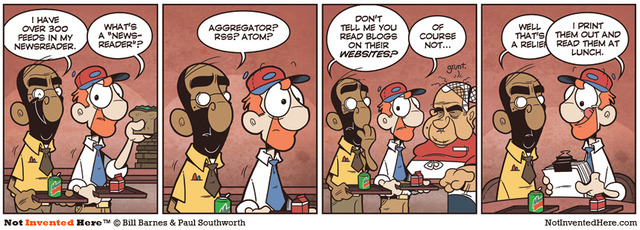

ROSA Planet gets rolling release

"ROSA Planet" switches to rolling release scheme

Our regular readers should remember our Technical bulletin called "ROSA Planet", aimed to readers with good technical and IT skills. Surely it was good, but: it was the old-fashion magazine format, it had one-month release schedule and it was wrapped in PDF. Those things are not compatible with modern fast, mobile and super cool life style.

Good news everyone!

We are switching to "ROSA Planet 2.0" which will be almost as rolling release in the way that new articles will appear right after they are ready, always keeping fresh and cool. In other words — this is classical blog, which you may read in a chronological, sequential or calendar mode. You may also subscribe to the Atom RSS here http://wiki.rosalab.ru/en/index.php?title=Blog:ROSA_Planet&feed=atom

You may easily navigate to the blog by clicking the link ROSA Planet on the left side of the main page of our wiki.

And lest you miss the most interesting articles, once in a month we'll be releasing a special digest issue with additional PDF file to those users who prefer reading it offline.

Happy reading!

And do not hesitate to use good old-fashion e-mail mode to contact us at info@rosalab.ru.

GRUB2: improving of option and bugfixing

Our developers continue to improve GRUB2. Some bugs have been found and fixed. Also one option have been improved. Patches were sent to the upstream and accepted.

The first complete theme tutorial for GRUB2

Our developer has written the first complete theme tutorial for GRUB2.

This tutorial is step-by-step detailed description of all graphical theme creation stages. Every option is listed with description of it's purpose, possibilities, limitations and features. The material contains examples of theme files and screenshots of the results.

You can make your own theme with precision up to a pixel with formulas and schemes given in the tutorial.

This tutorial is suitable for both beginners and those who had deal with GRUB2 themes.

You can see this tutorial at our wiki. Also this link can be found at the official GRUB2 documentation site.

PkgDiff 1.6 - Added compatibility check

PkgDiff tool is developed to visualize changes in files and attributes of all types of Linux software packages and is intended to be used by maintainers and QA-engineers in order to verify changes and prevent unintentional changes, which can break other packages in the repository. One of the most significant elements in the structure of the Linux distribution are system libraries. Average Linux distribution contains several thousand libraries and they have a huge number of internal dependencies. For this reason, an update of a library can break the build or behavior of other libraries and eventually lead to malfunction of user applications.

In the new version of the PkgDiff tool (1.6) we have added the ability to check the compatibility of changes in libraries. This was made possible due to a new ABI Dumper tool, which can extract information about the library ABI from the debug-files, which can then be analyzed by the ABI Compliance Checker tool. To check the compatibility of two packages A and B (old and new versions of a package), the user should pass appropriate debug-packages to the tool and run it with the additional -details option:

pkgdiff -old A-debuginfo.rpm -new B-debuginfo.rpm -details

We have added the new ABI Status section to the output report, which shows the backward compatibility level of the library ABI. To view detailed compatibility reports one can find the Debug Info Files table in the report and follow the links in the Detailed Report column.

ABI Dumper - A tool to dump ABI from DWARF debug-info of an ELF-object

When you compile ELF-object, such as shared library or kernel module, with the additional -g option, the debug information is inserted in this object. This information is typically used by the standard debugger gdb to provide the user with additional features when debugging the program. One can read this debug-info with the help of -debug-dump option of the readelf or eu-readelf utility (from elfutils package).

An important part of any ELF-object is its binary interface (ABI), provided for its client applications to use. In essence, it is a representation of the object's API on the binary level (after compilation). When you update the object in the distribution, it is important to maintain ABI backwards-compatible, otherwise it may cause a malfunction or crash of applications. Changes in the ABI are usually caused by corresponding changes in the API of an object or by changes in the configuration and compilation options. To track changes in the ABI of an object we use the ABI Compliance Checker tool. But until now it could only analyze shared libraries by extracting information from header files.

In order to expand and simplify usage of the ABI Compliance Checker tool, we use ABI Dumper tool for extracting ABI information from the debug-info of an object. Now, with the help of this tool one can track changes not only in the ABI of libraries, but also, for example, in the ABI of kernel modules. A typical use case is to create ABI dumps for the old and the new version of an object:

abi-dumper libtest.so.0 -o ABIv0.dump abi-dumper libtest.so.1 -o ABIv1.dump

and then compare them:

abi-compliance-checker -l libtest -old ABIv0.dump -new ABIv1.dump

Unfortunately, this approach has its drawbacks. Perhaps the main drawback is the inability to perform some compatibility checks. For example, there is no possibility to check for changes in the values of the constants (defines as well as const global data), since their values are inlined at compile time, and not presented in the debug information of the binary ELF-object. In general, there can be checked about 98% of all compatibility rules. Another disadvantage is the long time required to analyze large objects bigger than 50 mb. But one can use the dwz utility to compress input debug-info.

Packaging-tools - useful tools for maintainers

In ROSA is available packaging-tools — set of scripts for maintainers, which was firstly developed in Ark Linux.

Set includes spec-files generator for arbitrary packages — vs — which creates a blank of spec-file and opens it in vim (or in editor, which was specified in variables EDITOR or VISUAL). Also are available special generators for spec-files of packages specific types:

- vl

- for libraries

- vp

- for modules Perl

- vj

- for Java-packages

These generators create blacks of spec-files, which take into account specific of every concrete type of package (for example, nessesary subpackages are created for libraries).

Another useful script is e, a simple wrapper for gendiff. If you want to prepare patch for some package, you need to unzip archive with source code and edit nessesary files with e. In fact, this script will call an outside editor (specified in variables EDITOR or VISUAL; default is vim), but before it happend, it will save source file with suffix rosa2012.1~ (suffix can be edited with option -s). As soon as you have finished, cause gendiff to create patch.

This is an example, how you can prepare patch for file test.c for source code someapp-1.2.3 with editor geany

$ tar xzvf someapp-1.2.3.tar.xz $ cd someapp-1.2.3 $ export EDITOR=geany $ e test.c $ cd .. $ gendiff someapp-1.2.3 .rosa2012.1~ >my.patch

This way seems to be a bit complicated, but it is really suitable, if you need to prepare small patch for large source code file.

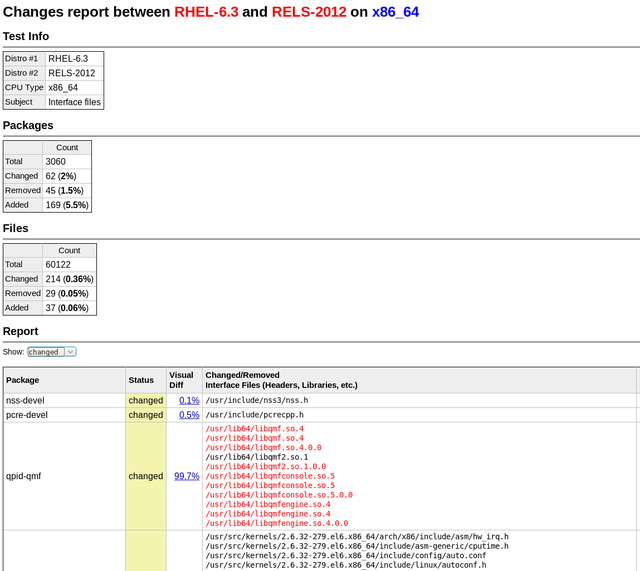

DistDiff - visualizing changes in Linux Distros

It isn't really easy to release stable updates for Linux distribution. You have to check, that all applications will work properly after update. They can be divided into two big groups: basic (from distribution) and personal (installed by user from other sources). Basic apps could be easily checked by installing them into updated distribution. Then they are checked manually. But we don't know anything about applications from second group. So, you have to not only check efficiency of them, but have to watch list of all changes in packages.

Compatibility of changes in system libraries can be checked with tool ABI Compliance Checker. To check changes in other packages we have developed tool DistDiff. It helps visualize changes in all packages of distribution and quickly look through them for violations of the compatibility. You have to input into tool only two directories: with old and new packages. In default mode tool checks changes in interface files (libraries, modules, scripts and others), which can potentially affect on compatibility. It can also check all files with option "-all-files".

It is based on tool we have developed earlier PkgDiff. It was also developed to visualize and compare changes in packages.

GRUB2 - memory leakage and lot bugs in progress bars fixed

Our developers tends to increase functionality and upgrade any program they deal with. Thats why they have created 5 more updates for the GRUB2 bootloader. Patches were sent to the GRUB2 upstream and accepted.

MagOS Linux based on ROSA Marathon

It is nice to have ROSA on your own machine, but sometimes many of us meet the situation when it is necessary to work on other’s computers where you can’t change the operating system. In many cases LiveCD will help, and ROSA provides possibility to boot into Live mode from flash or CD/DVD. However, though been very useful in many aspects, the Live mode suffers at least from the following issues:

- you can save results of your work on a hard drive of computer, but it is not easy to save them of the flash drive from which you have booted;

- there is no possibility ti change system settings, save them and use during the next boot;

- finally, to burn ISO image on flash and boot into the Live mode, you should preliminary move all files from the flash to somewhere. Even if you have a flash with dozens of Gb of free space and ROSA iso image only needs 1.5 Gb.

All these disadvantage are absent in MagOS Linux. Traditionally, MagOS was based on Mandriva, and some time ago ROSA-based variation was introduced.

Distribution builds are available here: http://magos.sibsau.ru/repository/dist/. The build based on ROSA Marathon has 2012lts suffix (at the moment of writing this paper, the latest build was MagOS_2012lts_20130228.tar.gz).

The build is actually a tarball with three folders — boot, MagOS, MagOS-Data. To install MagOS on your flash drive, you should unpack the tarball and place these three folders in the root of your flash. There is no need to remove existing data from flash, but remember that the system needs about 2Gb of space.

Note that it is necessary to mount flash without 'noexec' option., to be able to launch scripts directly from it (this is required to make a flash with MagOS bootable). ROSA uses 'noexec' option by default, so you will have to mount your flash stick manually from the command line.

In order to do this, launch console with root privileges (e.g., in KDE press Alt-F2 and «kdesu konsole») and execute the following command:

mount -o remount,exec /dev/sdx /mount_point

(here /dev/sdbx is a device file corresponding to your flash drive, /mount_point is a directory where the flash is mounted).

Or just remount you flash stick:

mount -o remount,exec /dev/sdb1 /mount_point

Now copy boot, MagOS, MagOS-Data and folders to /mount_point, go to /mount_point/boot/syslinux folder and launch install.lin.

You will be asked if you are sure to make the device bootable. To agree, press enter.

Now leave the /mount_point folder and unmount the flash by 'umount /mount_point' command.

Now reboot your machine and boot from flash. You should see MagOS boot selection menu with several boot options.

The system is based on ROSA, but by default a standard KDE is used, without SimpleWelcome and RocketBar. Besides KDE, one can load Gnome or LXDE; to do this, logout and choose appropriate session type in the menu at the bottom of the screen. Remember that default user/password are user/magos.

MagOs Linux can be installed on flash not only in Linux, but in Windows, as well. It is also possible to install the system on a usual machine — either as a standalone system or inside existing Windows partition.

More details can be found at MagOS wiki (http://www.magos-linux.ru/dwiki/doku.php), but unfortunately for our foreign users, all documentation is in Russian. However, MagOS Linux is very easy to use, in most cases you won’t need any additional documentation at all. And for getting started, the documentation on wiki should be enough — though the text is in Russian, the commands to be executed should be clear for any user. Also note that the thing you would likely want to do after the boot first is to change locale of the system, which is set to Russian by default. To do this, just go to KDE CC, find locale settings and choose English (this option is available by default; to choose other languages, you will have to install appropriate localization packages — at least kde-l10n ones).

ROSA Marathon is included in the Linux Application Checker knowledge base

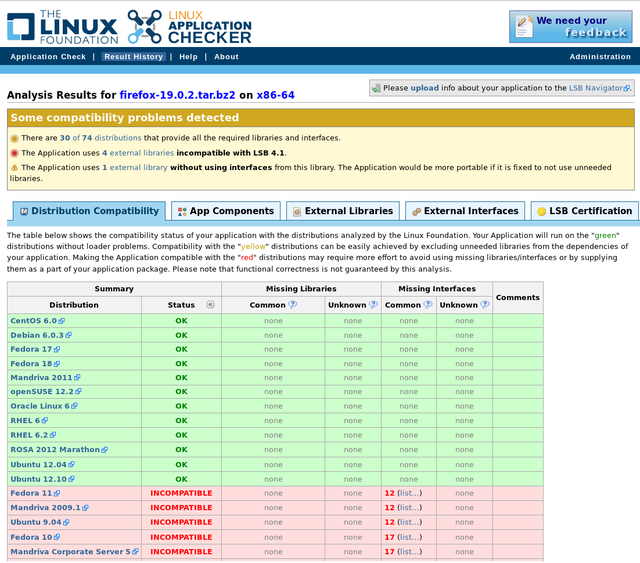

The Linux Foundation consortium has announced an updated version (4.1.8) of the Linux Application Checker (AppChecker) — a tool aimed to analyze compatibility of applications with different Linux distributions and check their compliance with Linux Standard Base (LSB). Currently AppChecker database for the x86 platform contains data about 84 distributions, now with ROSA 2012 Marathon among them. We are going to continue our collaboration with Linux Foundation engineers and provide them with necessary information about ROSA releases.

AppChecker analyzes compatibility of application with particular distribution by comparing a set of shared libraries and binary symbols required by application with sets of libraries and symbols provided by the system. Satisfaction of such requirements is a necessary condition of successful application launching in the operating system — if some library or symbol is absent, then application cannot be launched in the distribution. The list of libraries and binary symbols required for the application is obtained by analyzing application binary executables (in ELF format) and shared libraries. To be sure, only those requirements are taken into account that are not satisfied by libraries of the application itself. AppChecker actually emulates work of the system loader during application launch; if some required libraries or symbols are missing in the system, the application will simply fail to start (or will silently fail during its work, if lazy binding is used).

One should note that AppChecker contains data only about limited set of widespread libraries, not about all those that exist in distribution repositories. More precisely, it is guaranteed that the information is correct for libraries from the 'approved' list (http://linuxbase.org/navigator/browse/rawlib.php?cmd=display-approved) which currently contains almost 1,500 entries, while repositories of most distributions (in partiular, ROSA) contains several thousands of libraries. If application requires library not included in the approved list, AppChecker will honestly report that it can’t say if this library is present in certain distributions or not.

As an example usage, one can see that the Firefox build downloaded from http://mozilla.org (of the version 19.0.2 at the moment of writing this article) cannot be used in old systems such as Fedora 10 or Ubuntu 9.04.

ROSA tools in upstream

While creating ROSA, we not only develop and adapt different packages for our distribution, but also design new tools for other developers.

One of such tools is API Sanity Checker, which is aimed to automatically generate tests for C++/C libraries.

For its work, the tool requires only header files with declarations of library functions (and all necessary data types). Using this information, API Sanity Checker generates tests that calls every function from the library with proper arguments. Usually these automatically generated tests are used as a template for developing more complex sets (with enumeration of different values of parameters, their combinations, etc).

The tool is absolutely free (the source code can be found here) and can be used by everybody. For example, not so far API Sanity Checker has been integrated in the development cycle of the GammaLib library. As a result of the tool's work, 11 errors were found and fixed in that library (https://cta-jenkins.irap.omp.eu/job/gammalib-sanity/).

We recommend all upstream developers of different C/C++ libraries to follow this successful example. The resources to create test set is minimal, but number of errors found can be really great.